Инструменты сайта

Основное

Содержание

Galois field GF(16) (version for coders)

The Galois field $ \mathbf{GF}(16) $ consists of $ 16 $ elements, which will be denoted by small Gothic letters $ \mathfrak a,\mathfrak b, \mathfrak c,\dots $ Every such an element is a four bits vector $$ \mathfrak a=(A_0,A_1,A_2,A_3) \ , \{A_j\}_{j=0}^3 \subset \{0,1\} \ . $$

Addition is defined as the component wise (bitwise) modulo $ 2_{} $ (XOR): $$ \mathfrak a+\mathfrak b=(A_0,A_1,A_2,A_3)\oplus (B_0,B_1,B_2,B_3)= $$ $$ =(A_0+B_0 \pmod {2},A_1+B_1 \pmod {2},\ A_2+B_2 \pmod {2},\ A_3+B_3 \pmod {2}) \ . $$

Multiplication is introduced in a more complicated manner. First of all, we want the result of multiplication of $ \mathfrak a $ by $ \mathfrak b $ to be a vector: $$ (A_0,A_1,A_2,A_3)\times (B_0,B_1,B_2,B_3)=(C_0,C_1,C_2,C_3) \in \mathbf{GF}(16) ; $$ i.e. the set $ \mathbf{GF}(16) $ should be closed (complete) with respect to multiplication. This demand blocks immediately a desire to address to the inner product. As for the cross product (vector product), it is defined only for the three-component vectors.

Multiplication

This operation will be introduced in a bypass route (Umveg), and for this route passes through the formalism of polynomials. Consider as yet arbitrary polynomail $ f(x)=x^4+a_1x^3+a_2x^2+a_3x+a_4 $ with the coefficients $ \{a_j\}_{j=1}^4 \subset \{0,1\} $. It is essential that the degree of this polynomial equals precisely $ 4_{} $. Let us correspond to any vector from $ \mathbf{GF}(16) $ a polynomial of the degree $ < 4 $: $$ \begin{array}{lll} \mathfrak a &=(A_0,A_1,A_2,A_3) \mapsto & a(x)= A_0x^3+A_1x^2+A_2x+A_3, \\ \mathfrak b &= (B_0,B_1,B_2,B_3) \mapsto & b(x)= B_0x^3+B_1x^2+B_2x+B_3 \ . \end{array} $$ Multiply these polynomials. The resulting polynomial $ a(x)b(x) $ possesses a degree $ \le 6 $. Calculate the remainder of the division of this polynomial by $ f_{}(x) $: $$ a(x)b(x)\equiv q(x)f(x) + \underbrace{{\tilde c}_0x^3+{\tilde c}_1x^2+{\tilde c}_2x+{\tilde c}_3}_{=r(x)} \ . $$ Here $ q_{}(x) $ stands for the quotient of the division (and we are not interested in it); as for the coefficients of the remainder $ r_{}(x) $ — we need them. Note that $ \deg r(x) \le 3 $, i.e. this polynomial is defined by $ 4_{} $ coefficients. Since the leading coefficient of $ f_{}(x) $ equals $ 1_{} $, the coefficients of $ r_{}(x) $ are necessarily integers: $ \{ {\tilde c}_j \}_{j=0}^3 \subset \mathbb Z $. We will take them modulo $ 2_{} $, i.e. replace the even coefficients by $ 0_{} $ and odd ones by $ 1_{} $. The resulting vector of modulized coefficients $$ \mathfrak c= (c_0,c_1,c_2,c_3)=({\tilde c}_0,{\tilde c}_1,{\tilde c}_2,{\tilde c}_3) \pmod{2} $$ will be taken as the product of the element $ \mathfrak a $ by element $ \mathfrak b $; and this fact will be written down as $ \mathfrak c=\mathfrak a \times \mathfrak b $.

Example. Let $ \mathfrak a=(1,0,1,1), \mathfrak b=(0,1,1,1) $ and $ f(x)=x^4+x^3+x $. One has $ a(x)=x^3+x+1,\ b(x)=x^2+x+1 $ with $$ a(x)b(x) \equiv x^5+x^4+2\,x^3+2\,x^2+2\,x+1 \ . $$ Divide the result by $ f_{}(x) $: $$ \begin{array}{rrrrrrr|l} x^5&+ x^4 &+2x^3 &+2x^2 &+2x &+1 && x^4+x^3+x\\ x^{5}&+x^4&&+x^2&& && \overline{ x \quad } \\ \hline &&2\,x^3&+x^2&+2\,x&+1 \end{array} \qquad \Rightarrow \quad c(x)= 2\,x^3+x^2+2\,x+1 $$ $$ \Rightarrow \quad \mathfrak a \times \mathfrak b=(0,1,0,1) \ . $$ Note that the final result is not altered if we reduce the coefficients of the product $ a(x)b(x) $ modulo $ 2_{} $: $$ x^5+x^4+2\,x^3+2\,x^2+2\,x+1 \equiv x^5+x^4+1 \pmod{2} , $$ the division of the latter by $ f_{}(x) $ yields as a remainder $ x^{2}+1 $. ♦

Further simplifications for the multiplication will be discussed ☟ LATER.

We will interprete the notation $$ u(x) \quad (\operatorname{modd} \ 2,f(x)) $$ as the result of the performance of the division operation of a polynomial $ u_{}(x) $ by $ f_{}(x) $ with the reduction of all (the intermediate and final) integers «up to their parity», i.e. modulo $ 2_{} $. We will refer to this remainder as the (double) moduli $ 2,f(x) $ remainder (residue).

If we select for $ f_{}(x) $ any other polynomial then the result of multiplication will be different. Nevertheless, for any selection, the multiplication is commutative: $ \mathfrak a \times \mathfrak b= \mathfrak b \times \mathfrak a $ since this statement is true for the operation of polynomial multiplication.

In foregoing sections I will also use instead of multiplication sign $ \times_{} $ also • or will skip this sign in a manner usual for numbers.

It is evident that the multiplication of any element of the field by a zero element $ \mathfrak o=(0,0,0,0) $ results in the zero element. It is also evident that, for any choice of $ f_{}(x) $, the element $ \mathfrak e=(0,0,0,1) $ plays a role of a unity element with respect to multiplication: $ \mathfrak e\cdot \mathfrak a = \mathfrak a \cdot \mathfrak e=\mathfrak a $. Indeed, one has $ a(x)\cdot 1 \equiv a(x) $. Consider further the multiplication by $ (0,0,1,0) $. $$ a(x) \cdot x \equiv A_0x^4+A_1x^3+A_2x^2+A_3x \equiv $$ $$\equiv A_0f(x)+ (A_1+A_0a_1)x^3+(A_2+A_0a_2)x^2+(A_3+A_0a_3)x+A_0a_4 \quad \Rightarrow $$ $$ \Rightarrow \quad \mathfrak (A_0,A_1,A_2,A_3) \cdot (0,0,1,0) = \left\{ \begin{array}{lr} (A_1,A_2,A_3,0) & if \ A_0=0, \\ (A_1+a_1,A_2+a_2,A_3+a_3, a_4) \pmod{2} & if \ A_0=1. \end{array} \right. $$ This means: the result is either a left-shift of the vector $ \mathfrak a $ or a similar shift accomplished by an addition of the coefficient vector of $ f_{} $.

Verify the distributivity: $ \mathfrak a (\mathfrak b+\mathfrak c)= \mathfrak a \mathfrak b+ \mathfrak a \mathfrak c $.

Division

Introduction of multiplication seems not to cause evident problems… The problems appears with division. We do not know its counterparts for vectors, however, we do know this word for polynomials. Thus, the polynomail $ x^3+1 $ is divisible by $ x+1 $: $$ \mathfrak a = x^3+1, \mathfrak b=x+1 $$ $$ \Rightarrow \quad \mathfrak a / \mathfrak b = \frac{x^3+1}{x+1}=\frac{(x+1)(x^2-x+1)}{x+1} \equiv x^2-x+1 \equiv_{2} x^2+x+1 \ . $$ Therefore one may steal this result for vectors $$(1,0,0,1)/(0,0,1,1)=(0,1,1,1) \ . $$ Note that it is independent (invariant) of the choice of the polynomial $ f_{}(x) $, enabled in the previous section for introducing the multiplication. Unfortunately, the extension of the polynomial division to any combination of vectors faces the problem: the polynomial $ x^3+1 $ is idivisible by $ x^2+1 $: $$ x^3+1\equiv x(x^2+1)+(x+1) \ . $$ Where should we go? One can think of the division with the «remainder» or, alternatively… By the way, what do we expect from this operation? — We do need such a correspondence between the odrered pair $ \mathfrak a , \mathfrak b $ and the element $ \mathfrak c $ that gives a fulfilment of the eqaulity $ \mathfrak a= \mathfrak b \cdot \mathfrak c $. A restriction appears immediately, namely: $ \mathfrak b \ne \mathfrak o=(0,0,0,0) $. Thus we wish the set $ \mathbf{GF}(16) \setminus \mathfrak o $ to be closed (complete) with respect to the division. In other words, we wish to get an analogue for the poperty of the divisibility of integers (or polynomials) with zero remainder. In the just treated example, for the pair $ \mathfrak a = (1,0,0,1), \mathfrak b = (0,0,1,1) $ this requirement was met; however, we do also need its fulfilment for the reordered pair $ \mathfrak b, \mathfrak a $ and to get a vector as a result.

To solve this problem, we should return to the definition of the multiplication suggested in the previous section. We are looking for the element $ \mathfrak c_1 $ satisfying the equality $ \mathfrak b= \mathfrak a \cdot \mathfrak c_1 $. If the multiplication is defined via double modulo $ 2, f(x) $ polynomial multiplication with $ f_{}(x) $ defined, for instance, like $ f(x)=x^4+x^3+x $, then the polynomial representation for $ \mathfrak c_1 $ can be found by virtue of the indeterminate coefficients method: $$ x+1 \equiv (x^3+1)(\hat c_0 x^3 +\hat c_1 x^2+\hat c_2 x+\hat c_3) \quad (\operatorname{modd} \ 2, x^4+x^3+x) \ . $$ Therefore we have just reduced the problem to that of polynomial division $$ x+1 \equiv (-\hat c_0+\hat c_1-\hat c_2+ \hat c_3)\,x^3+\hat c_0\,x^2+(\hat c_1-\hat c_0)x+\hat c_3 \pmod {2} \ .$$ Polynomial in the right-hand side coincides with the one from the left-hand side provided that the following system of congruences $$ 0\equiv_{2}-\hat c_0+\hat c_1-\hat c_2+ \hat c_3, \ 0 \equiv_{2} \hat c_0,\ 1 \equiv_{2} \hat c_1-\hat c_0,\ 1 \equiv_{2} \hat c_3 $$ is consistent. It is exactly the case with the following unique solution: $$ \hat c_0 =0, \hat c_1=1, \hat c_2=0, \hat c_3=1 \, . $$ Therefore, the result of division of $ \mathfrak b $ by $ \mathfrak a $ is: $$(0,0,1,1)/(1,0,0,1)=(0,1,0,1) \ . $$

Is this algorithm valid for arbitrary elements $ \mathfrak a $ and $ \mathfrak b $? — Not necessarily! Take $ \mathfrak a = (0,0,1,1), \mathfrak b=(0,0,1,0) $. The indeterminate coefficients method leads one to the inconsistent system of congruences. Why does it happen? What restriction should be imposed on the selection of the polynomial $ f_{}(x) $ that guarantees us the performability of the division for arbitrary pair of the field elements (excluding the division by zero element)?

To answer these questions, let us reduce the problem to a simpler one: in fact, we do need the operation of the element inversion: for any $ \mathfrak a \ne \mathfrak o $ find something denoted as $ \mathfrak a^{-1} $ with the property $ \mathfrak a^{-1} \mathfrak a = \mathfrak e $. Reformulate this demand into the polynomial formalism: $$ 1 \equiv \tilde c(x) a(x) \quad (\operatorname{modd} \ 2, f(x)) $$ or equivalently: $$ 1 \equiv_{2} \tilde c(x) a(x)+ q(x) f(x) \ ; $$ for some polynomial $ q_{}(x) $. Last formula has to be clarified. is is not only just a congruence modulo $ 2_{} $, it is the polynomial identity: the left-hand side polynomial identically equal to $ 1_{} $ coincide with the right-hand side polynomial. Consequently the coefficients of the powers of $ x_{} $ in the both polynomials should be equal.

To find the polynomial $ f_{}(x) $ satisfying the relationship $ c(x) a(x)+ q(x) f(x) \equiv_{2} 1 $ for arbitrary polynomial $ a(x)\not\equiv 0 $, $ \deg a(x)\le 3 $, let us address to the theory of polynomials over the infinite fields, i.e. with the coefficients from $ \mathbb R_{} $ or $ \mathbb C_{} $. For these fields, the equality $ c(x) a(x)+ q(x) f(x) \equiv 1 $ is known as the Bézout identity. It is valid if and only if the polynomials $ f_{}(x) $ are $ a_{}(x) $ relatively prime, i.e. iff their greatest common divisor identically equals $ 1_{} $: $ \operatorname{GCD}(f(x),a(x)) \equiv 1 $. If, in addition, we require the fulfillment of this condition for any polynomial $ a(x)\not\equiv 0 $, $ \deg a < \deg f $, then this requirement leads one to the property of the polynomial $ f_{}(x) $ known as the irreducibility over the field $ \mathbb R_{} $ or $ \mathbb C_{} $. It is equivalent to the demand that $ f_{}(x) $ should not be divisible by any polynomial of a lower degree except for the constant. Thus the polynomial $ f(x)=x^4+x^3+x $ is evidently reducible over $ \mathbb R_{} $ and over $ \mathbb C_{} $ : $$ f(x)\equiv x(x^3+x^2+1) ; $$ and this is exactly the explanation of the failure with its utilization for generating the division in the finite field $ \mathbf{GF}(16) $. Indeed, for the element $ \mathfrak b=(0,0,1,0) $, the corresponding polynomial is $ b(x)\equiv x $, but the identity $ c(x) b(x)+ q(x) f(x) \equiv 1 $ can not be satisfied for any choice of polynomials $ c_{}(x) $ and $ q_{}(x) $. For any pair of candidates, the left-hand side polynomial in this identity is divisible by $ x_{} $ while that one from the right-hand side is not divisible by $ x_{} $.

Let us go further. The irreducibility property is defined subject to the set in which the polynomial is treated. If we deal with the polynomials with integer coefficients and expect the result of division to be a polynomial over inegers then the irreducibility should be treated over $ \mathbb Z_{} $. The irreducible over $ \mathbb Z_{} $ polynomials are not rarity even if we impose an additional restriction on their coefficients — we are looking for those from the set $ \{0,1\} $. Here are some irreducible polynomials of the degree $ 4_{} $: $$ x^4+1,\ x^4+x+1,\ x^4+x^3+1,\ x^4+x^3+x^2+1; $$ as for polynomials $$ x^4+x^3+x+1,\ x^4+x^2+1,\ x^4+x $$ they are reducible over $ \mathbb Z_{} $.

The irreducibility of the polynomial $ f_{}(x) $ over integers is a necessary condition for generation of a finite field which is closed with respect to the division operation. Nevertheless, it is not a sufficient one. This is because of an additional restriction imposed on the polynomial coefficients — they all are either $ 0_{} $ or $ 1_{} $. The Bézout identity should be treated modulo $ 2_{} $. In other words, even if the polynomial $ f_{}(x) $ is irreducible over $ \mathbb Z_{} $, the identity $$ c(x) a(x)+ q(x) f(x) \equiv 1 $$ might be not satisfied for any choice of polynomials $ c_{}(x) $ and $ q_{}(x) $ provided that we treat the coefficients of the left-hand side polynomial modulo $ 2_{} $. For instance, for the polynomials $ f(x)=x^4+1, a(x)=x+1 $, the last identity is not valid: substitution $ x=1 $ in it yields $ 2\,c(x)+2\, q(x) \equiv_2 0 $ and not $ 1_{} $, as desirable.

Therefore the set of irreducible polynomials of the degree $ 4_{} $ with the coefficients from $ \{0,1\} $ should be reduced to the one containing polynomials irreducible modulo $ 2_{} $. Among the irreducible over $ \mathbb Z $ polynomials listed in the previous example the following ones should be rejected: $$ x^4+1 \equiv_{2} (x^2+1)^2 \equiv_{2} (x+1)^4,\ x^4+x^3+x^2+1 \equiv_{2} (x+1)(x^3+x+1) \ . $$ Exchaustive search results in only $ 3_{} $ quartic polynomials irreducible modulo $ 2_{} $: $$ x^4+x+1,\ x^4+x^3+1,\ x^4+x^3+x^2+x+1 \ . $$

It can proved the existence of the irreducible polynomials of any degree; it is also known their amount for any degree specialization. An extra problem is the construction of these polynomials for high degrees ( $ 64, 128 $ etc.) The practice of implementing the error correcting codes narrows the set of potential field generating polynomials to sparse irreducible polynomials, i.e. those ones with a large amount of zero terms (coefficients). V. the samples ☞ HERE.

We have just completed the construction of $ \mathbf{GF}(16) $. The division operation in this field can be introduced if the generating polynomial for the multiplication is chosen among the three above mentioned. To complete our definition of the finite field, we have to present a constructive procedure for the division operation. Since the division $ \mathfrak b / \mathfrak a $ can be reduced to the multiplication $ \mathfrak b \cdot \mathfrak a^{-1} $, let us go back to the Bézout identity which laid at the basis of the inversion operation $$ (A_0x^3+A_1x^2+A_2x+A_3)^{-1} \quad (\operatorname{modd} \ 2, f(x)) \ . $$

There exists several approaches for resolving this identity. They are happen to be constructive but a little bit cumbersome in presentation. The major one is based on the Euclidean algorithm for the computation of the greatest common divisor for polynomials $ f_{}(x) $ and $ a_{}(x) $. As a matter of fact, we do not inetersted in $ \operatorname{GCD} (f(x),A_0x^3+A_1x^2+A_2x+A_3) $ since for any values of the coefficients $ \{A_j\}_{j=0}^3 $ it equals $ 1_{} $. Where do we take this certainty from? — It is caused by the irreducibility of $ f_{}(x) $. Actually, we are interested just only in the expressions for the quotients appeared in the body of the Euclidean algorithm.

Example. For $ f(x)=x^4+x+1, a(x)=x^3+x+1 $ one has: $$ \left. \begin{array}{lcl} f(x) &\equiv & x\cdot a(x) + (x^2+1); \\ a(x) &\equiv & x \cdot (x^2+1)+ 1 \end{array} \right\} \pmod{2} $$ The unity in the last formula represents the value of $ \operatorname{GCD} (f(x),a(x)) $. Using the expressions for the quotients $$ q_1(x)=x,\ q_2(x)=x, $$ compose the expression for the continuant: $$ b(x)= q_1q_2+1=x\cdot x +1 \equiv_{_2} x^2+1 \ . $$ Check: $$ (x^3+x+1)(x^2+1) \equiv x^5+2\,x^3+x^2+x+1 \equiv 1 \quad (\operatorname{modd} \ 2,f(x)) \ . $$ Thus, the inversion for $ \mathfrak a=(1,0,1,1) $ with respect to the double moduli equals $ (0,1,0,1) $.

This element is defined uniquely. If its is desirable to obtain its universal representation, i.e. for arbitrary $ \mathfrak a= (A_0,A_1,A_2,A_3) $ and $ f(x)=x^4+a_1x^3+a_2x^2+a_3x+a_4 $ (and continuant seems to look too complicated), I can provide you with an alternative determinantal representation: $$ b(x)= \left|\begin{array}{ccccccl} 1&a_1&a_2&a_3&a_4& & \\ &1&a_1&a_2&a_3&a_4&\\ &&1&a_1&a_2&a_3&0\\ &&&A_0&A_1&A_2&1\\ &&A_0&A_1&A_2&A_3&x\\ &A_0&A_1&A_2&A_3&0&x^2\\ A_0&A_1&A_2&A_3&0&0&x^3 \end{array}\right|_{7\times 7} \pmod{2} , $$ (all the unspecified entries are just $ 0_{} $). Expand the determinant by its last column — the $ 6_{} $th order minors corresponding to $ x^3,x^2,x,1 $ are the components of the vector $ \mathfrak a^{-1} $.

Is the order of the last determinant too high? — Diminish it up to that of the order $ \deg f_{} $. Compute $$ a(x),\ x\cdot a(x),\ x^2 \cdot a(x),\ x^3 \cdot a(x) \quad (\operatorname{modd} \ 2,f(x)) \ , $$ recursively on setting $ a_0(x)\equiv a(x)= A_0x^3+A_1x^2+A_2x+A_3 $: $$ \left. \begin{array}{lll} a_1(x)\equiv x a_0(x) & \equiv & K_0x^3+K_1x^2+K_2x+K_3,\\ a_2(x)\equiv x a_1(x) & \equiv & L_0x^3+L_1x^2+L_2x+L_3 ,\\ a_3(x)\equiv x a_2(x) & \equiv & M_0x^3+M_1x^2+M_2x+M_3 \ , \end{array} \right\} \quad (\operatorname{modd} \ 2,f(x)) \ . $$ Place the coefficients of $ \{a_j(x) \}_{j=0}^3 $ into the $ 4_{} $th order matrix: $$ \mathbf{A}= \left(\begin{array}{llll} M_0 & M_1 & M_2 & M_3 \\ L_0 & L_1 & L_2 & L_3 \\ K_0 & K_1 & K_2 & K_3 \\ A_0 & A_1 & A_2 & A_3 \end{array} \right) $$ replace the last column by $ [x^3,x^2,x,1]^{\top} $. Compute the determinant — this is the polynomial we are looking for. Thus, for the present expample, one gets: $$ \mathfrak a=a_0(x)=x^3+x+1,\ a_1(x)=x^2+1,\ a_2(x)=x^3+x,\ a_3(x)=x^2+x+1 $$ and $$ \mathfrak a^{-1}= \left|\begin{array}{cccl} 0 & 1 & 1 & x^3 \\ 1 & 0 & 1 & x^2 \\ 0 & 1 & 0 & x \\ 1 & 0 & 1 & 1 \end{array} \right| \pmod{2} . $$ ♦

The matrix $ \mathbf A_{} $ from the last example will appear once again in one of foregoing ☞ SECTIONS.

Resume: in the field $ \mathbf{GF}(16) $ the multiplication operation can be introduced in $ 3_{} $ different ways depending on the choice of the irreducible modulo $ 2_{} $ quartic polynomial. We will refer to this polynomial as a generating polynomial of the field. Subsequently, in the present page, when referring to an irreducible polynomial we will consider irreducibility modulo $ 2_{} $.

Is there any principal difference between the fields generated by these triple of polynomials? — As a matter of fact, no. It can be proved that all the Galois fields of the same order are isomorphic, i.e. there exists a bijection between any pair of such fields and this bijection keeps the results of addition and multiplication. Verification of this claim for $ \mathbf{GF}(16) $ v. ☞ HERE.

Example. For the field $ \mathbf{GF}(16) $ generated by $ f(x)=x^4+x+1 $, find the quotient of the division of $ \mathfrak b = (0,0,1,1) $ by $ \mathfrak a= (1,1,1,1) $.

Solution. Here $ a(x)=x^3+x^2+x+1, b(x)=x+1 $. The Bézout identity $$ u(x)a(x)+v(x) f(x) \equiv 1 $$ (modulo $ 2_{} $) is fulfilled for $ u(x)=x^3, v(x)=\ldots $ Therefore $$ a^{-1}(x) \quad (\operatorname{modd} \ 2,f(x)) = x^3 $$ and $$ b(x) a^{-1}(x) \quad (\operatorname{modd} \ 2,f(x)) = x^3+x+1 \ . $$ ♦

Exponentiation

The multiplication operation is clarified. Almost clarified. As a matter of fact, there is an aspect of this operation in the finite field which does not have similarity in an infinite field. Consider an arbitrary element $ \mathfrak a \ne \mathfrak o $ of the field and raise it to powers: $$ \mathfrak a^{0}=\mathfrak e, \mathfrak a^{1}, \mathfrak a^2,\dots $$ All the entries of this sequence are the elements from $ \mathbf{GF}(16) $. Since the latter contain a finite number of elements, this sequence should become periodical (perhaps after some initial steps).

Example. For $ f(x)=x^4+x+1 $ one has: $$ \begin{array}{ll} for \quad a(x)=x^3+x+1: & 1,\ x^3+x+1,\ x^3+1, x^3+x^2,\ x^3+x^2+1,\ x^2+x,\ x^3+x^2+x+1, \\ \quad & x+1,\ x^3+x^2+x,\ x^3,\ x^2+x+1,\ x^2,\ x^3+x,\ x,\ x^2+1,\ 1,\dots \\ for \quad \quad a(x)=x^2+x+1: & 1,\ x^2+x+1,\ x^2+x,\ 1,\dots \\ for \quad \quad a(x)=x^3+x: & 1,\ x^3+x,\ x^3,\ x^3+x^2+x+1,\ x^3+x^2,\ 1,\dots \end{array} $$ It can be verified that for any nonzero and nonunity element of the field, its powers form a periodic sequence with periodicity equal to one of the following numbers $ 3,5,15 $. What are these numbers? — They are the divisors of $ 2^4-1 $. ♦

The observed fact demonstrates an occurence of the general property of finite fields. Since this set, on ejecting the zero element, becomes a group with respect to multiplication, the order of the group should be divisible by the order of any its element. The following equality is valid: $$ \mathfrak a^{15}=\mathfrak e \quad for \quad \forall a \ne \mathfrak o \ , $$ (for the general case, it is also reffered to as the generalized Fermat theorem). We are interested in the generator of thie group, i.e. such an element whose powers form the whole group. It is called the primitive element of the finite field. It can be proved that such an element exists, the number of primitive elements is also known — and this number is invariant on the choice of generating polynomial of the field. For instance, the field $ \mathbf{GF}(16) $ contains $ \phi(15)= 8 $ primitive elements; here $ \phi_{} $ denotes the Euler totient function. In the last example, the element $ (1,0,1,1) $ is a primitive one while the elements $ (0,1,1,1) $ and $ (1,0,1,0) $ are not primitive.

Expressions for the primitive elements depend on the choice of the generating polynomial $ f_{}(x) $.

Denote a particular primitive element by $ \mathfrak A $. For the field element $ \mathfrak a \ne \mathfrak o $ we will refer to the minimal possible exponent $ k \in \mathbb N $ such that $ \mathfrak A^k =a $ as the discrete logarithm of $ \mathfrak a $ base the primitive element $ \mathfrak A $. In comparison with its counterpart from the real numbers theory, the discrete logarithm function behaves chaotically, that means: the knowledge of its value for one element $ \mathfrak a $ does not help in estimation for any other element $ \mathfrak b $. In principle, it is possible to write down the explicit expression for the discrete logarithm as a function of the entries of the element $ \mathfrak a=(A_0,A_1,A_2,A_3) $; however, for the general case such formulas are cumbersome and useless.

Example. $ f(x)=x^4+x+1 $. $ \mathfrak A $ stands for $ (0,0,1,0)=x $.

"Logarithm Table".

$$ \begin{array}{l|rrrr|c|c|c} \mathfrak A^0 & & & & 1 & 0001 & & \\ \mathfrak A^1 & & & \mathfrak A & & 0010 & \Pi & f=0 \\ \mathfrak A^2 & & \mathfrak A^2 & & & 0100 & \Pi & f=0 \\ \mathfrak A^3 & \mathfrak A^3 & & & & 1000 & & f_3=0 \\ \hline \mathfrak A^4 & & & \mathfrak A & +\mathfrak e & 0011 & \Pi & f=0 \\ \mathfrak A^5 & & \mathfrak A^2 & +\mathfrak A & & 0110 & & f_4=0 \\ \mathfrak A^6 & \mathfrak A^3 & +\mathfrak A^2 & & & 1100 & & f_3=0\\ \mathfrak A^7 & \mathfrak A^3 & & + \mathfrak A & +\mathfrak e & 1011 & \Pi & f^{\ast}=0 \\ \hline \mathfrak A^8 & & \mathfrak A^2 & & +\mathfrak e & 0101 & \Pi & f=0 \\ \mathfrak A^9 & \mathfrak A^3 & & +\mathfrak A & & 1010 & & f_3=0 \\ \mathfrak A^{10} & & \mathfrak A^2 &+\mathfrak A & +\mathfrak e & 0111 & & f_4=0 \\ \mathfrak A^{11} & \mathfrak A^3 & +\mathfrak A^2 & +\mathfrak A & & 1110 & \Pi & f^{\ast}=0 \\ \hline \mathfrak A^{12} & \mathfrak A^3 & +\mathfrak A^2 & + \mathfrak A& +\mathfrak e & 1111 & & f_3=0 \\ \mathfrak A^{13} & \mathfrak A^3 & +\mathfrak A^2 & & +\mathfrak e & 1101 & \Pi & f^{\ast}=0 \\ \mathfrak A^{14} & \mathfrak A^3 & & & +\mathfrak e & 1001 & \Pi & f^{\ast}=0 \end{array} $$

It turns out that, in fact, $ \mathfrak A=x $ happens to be a primitive element of the field. The letter $ \Pi $ in the fourth column of the table marks the primitive elements of the field. The last column should be ignored until we will be in need of its containment in one of the foregoing ☟ SECTIONS. Surprisingly, that the entries of the second column of this table do not change if we take for $ \mathfrak A $ any of the elements $ x^2, x^4, x^8 $.

Since this field will be oftenly used in further speculation, I present here one extra table —

"Summation table".

$$ \begin{array}{l||l|l|l|l|l|l|l|l|l|l|l|l|l|l|l} & \mathfrak A^0 & \mathfrak A^1 & \mathfrak A^2 & \mathfrak A^3 & \mathfrak A^4 & \mathfrak A^5 & \mathfrak A^6 & \mathfrak A^7 & \mathfrak A^8 & \mathfrak A^9 & \mathfrak A^{10} & \mathfrak A^{11} & \mathfrak A^{12} & \mathfrak A^{13} & \mathfrak A^{14} \\ \hline \hline \mathfrak A^0 & \mathfrak o & \mathfrak A^4 & \mathfrak A^8 & \mathfrak A^{14} & \mathfrak A & \mathfrak A^{10} & \mathfrak A^{13} & \mathfrak A^{9} & \mathfrak A^{2} & \mathfrak A^{7} & \mathfrak A^{5} & \mathfrak A^{12} & \mathfrak A^{11} & \mathfrak A^{6} & \mathfrak A^{3} \\ \hline \mathfrak A^1 & & \mathfrak o & \mathfrak A^5 & \mathfrak A^9 & \mathfrak A^0 & \mathfrak A^2 & \mathfrak A^{11} & \mathfrak A^{14} & \mathfrak A^{10} & \mathfrak A^{3} & \mathfrak A^{8} & \mathfrak A^{6} & \mathfrak A^{13} & \mathfrak A^{12} & \mathfrak A^{7} \\ \hline \mathfrak A^2 & & & \mathfrak o & \mathfrak A^{6} & \mathfrak A^{10} & \mathfrak A^{1} & \mathfrak A^{3} & \mathfrak A^{12} & \mathfrak A^0 & \mathfrak A^{11} & \mathfrak A^{4} & \mathfrak A^{9} & \mathfrak A^{7} & \mathfrak A^{14} & \mathfrak A^{13} \\ \hline \mathfrak A^3 & & & & \mathfrak o & \mathfrak A^{7} & \mathfrak A^{11} & \mathfrak A^{2} & \mathfrak A^{4} & \mathfrak A^{13} & \mathfrak A^{1} & \mathfrak A^{12} & \mathfrak A^{5} & \mathfrak A^{10} & \mathfrak A^{8} & \mathfrak A^0 \\ \hline \mathfrak A^4 & & & & & \mathfrak o & \mathfrak A^8 & \mathfrak A^{12} & \mathfrak A^3 & \mathfrak A^5 & \mathfrak A^{14} & \mathfrak A^2 & \mathfrak A^{13} & \mathfrak A^6 & \mathfrak A^{11} & \mathfrak A^9 \\ \hline \mathfrak A^5 & & & & & & \mathfrak o & \mathfrak A^9 & \mathfrak A^{13} & \mathfrak A^4 & \mathfrak A^6 & \mathfrak A^0 & \mathfrak A^3 & \mathfrak A^{14} & \mathfrak A^7 & \mathfrak A^{12} \\ \hline \mathfrak A^6 & & & & & & & \mathfrak o & \mathfrak A^{10} & \mathfrak A^{14} & \mathfrak A^{5} & \mathfrak A^{7} & \mathfrak A & \mathfrak A^{4} & \mathfrak A^{0} & \mathfrak A^{8} \\ \hline \mathfrak A^7 & & & & & & & & \mathfrak o & \mathfrak A^{11} & \mathfrak A^0 & \mathfrak A^{6} & \mathfrak A^{8} & \mathfrak A^2 & \mathfrak A^{5} & \mathfrak A \\ \hline \mathfrak A^8 & & & & & & & & & \mathfrak o & \mathfrak A^{12} & \mathfrak A & \mathfrak A^{7} & \mathfrak A^{9} & \mathfrak A^{3} & \mathfrak A^{6} \\ \hline \mathfrak A^9 & & & & & & & & & & \mathfrak o & \mathfrak A^{13} & \mathfrak A^{2} & \mathfrak A^{8} & \mathfrak A^{10} & \mathfrak A^{4} \\ \hline \mathfrak A^{10} & & & & & & & & & & & \mathfrak o & \mathfrak A^{14} & \mathfrak A^{3} & \mathfrak A^{9} & \mathfrak A^{11} \\ \hline \mathfrak A^{11} & & & & & & & & & & & & \mathfrak o & \mathfrak A^0 & \mathfrak A^{4} & \mathfrak A^{10} \\ \hline \mathfrak A^{12} & & & & & & & & & & & & & \mathfrak o & \mathfrak A^{1} & \mathfrak A^{5} \\ \hline \mathfrak A^{13} & & & & & & & & & & & & & & \mathfrak o & \mathfrak A^{2} \\ \hline \mathfrak A^{14} & & & & & & & & & & & & & & & \mathfrak o \end{array} $$ ♦

Resume: any nonzero element of the field $ \mathbf{GF}(16) $ admits three representations (hypostases1)):

1. This is a row-vector $ (A_0, A_1, A_2, A_3) $, the element of a vector space.

2. This is a polynomial $ A_0x^3+ A_1x^2+A_2x+ A_3 $.

3. This is an exponent $ \mathfrak A^k $ of a primitive element of the field.

What one of this representaions is the most convenient for applications? — The answer depends on a particular problem. Thus, to add the field elements is simpler given their representations in the vector form 1 or polynomial form 2 (compare with an alternative given by the last table). On the other side, if the logarithm table is known for represenation of any element $ (A_0, A_1, A_2, A_3) $ in powers of a primitive one, the the multiplication operation is simpler for the representation 3 : $$ \quad \mathfrak a= (A_0, A_1, A_2, A_3) = \mathfrak A^k,\ \mathfrak b= (B_0, B_1, B_2, B_3) = \mathfrak A^{\ell} \quad \Rightarrow \quad \mathfrak a \cdot \mathfrak b = \mathfrak A^{k+\ell} \ . $$ The exponent value $ k+\ell $ is taken as it is for the case $ k+\ell<15 $ and modulo $ 15_{} $ for $ k+\ell\ge 15 $.

Example. Let us get back to the example of multiplication of $ \mathfrak a=(A_0,A_1,A_2,A_3) $ by $ \mathfrak b=(1,1,0,1) $ for generating polynomial $ f(x)=x^4+x+1 $.

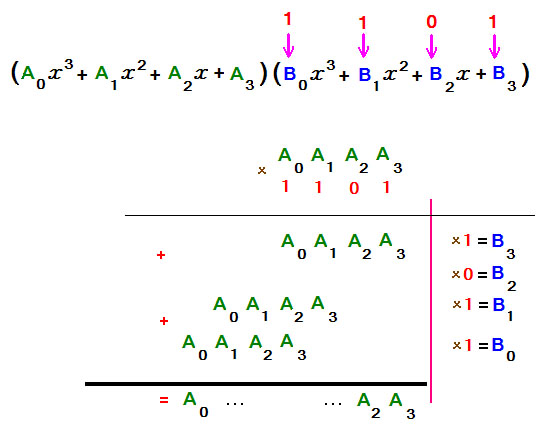

Solution 1 . Multiplication of the corresponding polynomials $ a(x)=A_0x^3+A_1x^2+A_2x+A_3 $ and $ b(x)=x^3+x^2+1 $ is traditionally performed as the long multiplication

i.e. we deal only with the multiples of the row of the coefficients of $ a_{}(x) $. Since the coefficients of the polynomial $ b_{}(x) $ are just only either $ 0_{} $ or $ 1_{} $, the multiplictaion is reduced to the manipulation with the row $ (A_0,A_1,A_2,A_3) $ — it may be shifted to one or more positions to the left with respect to the previous row. The corresponding bits are sum up modulo $ 2_{} $ (carry-less summation). The obtained result, namely the product $ a(x) b(x) $, is not yet a final one: we look for the remainder on its division by $ f_{}(x) $ (modulo $ 2_{} $). For this aim, the multiplication procedure should be organized организовать in another way: $$ a(x)(B_0x^3+B_1x^2+B_2x+B_3)= (a(x)B_0)x^3+(a(x)B_1)x^2+(a(x)B_2)x+(a(x)B_3)= $$ $$=\left\{\left[\left(a(x)B_0 \right)\cdot x +a(x)B_1 \right]\cdot x+a(x)B_2 \right\}\cdot x+a(x)B_3 $$ $$=\left\{\left[\left(a(x)\cdot x\right) + a(x) \right]\cdot x+0 \right\}\cdot x+a(x) \ , $$ with reduction of the result of each involved operations $ \big( \ast \big)\cdot x $, $ \big[ \ast \big]\cdot x $, $ \big\{ \ast \big\}\cdot x $ moduli $ 2,f(x) $: $$ a(x) b(x) \quad (\operatorname{modd} \ 2,f(x)) =$$ $$= \left\{\left[\left(a(x)\cdot x \quad (\operatorname{modd} \ 2,f(x)) \right) + a(x) \right]\cdot x \quad (\operatorname{modd} \ 2,f(x)) \right\}\cdot x \quad (\operatorname{modd} \ 2,f(x)) +a(x) \ . $$ The more so beccause the multiplication by $ x_{} $ moduli $ 2,f(x) $ admits a simple programming realization (v. the end of section ☞ MULTIPLICATION ).

Solution 2 . Let us solve the present example using the logarithm table from the previous example. For the primitive element $ \mathfrak A = x $ one has $ \mathfrak b=(1,1,0,1)= \mathfrak A^{13} $ while a similar representation for the element $ \mathfrak a $ can be suggested only for its specialization. Let, for instance, $ \mathfrak a=(1,0,1,1) $. Then $ \mathfrak a = \mathfrak A^{7} $. Consequently, $$ \mathfrak a \cdot \mathfrak b=\mathfrak A^{7}\cdot \mathfrak A^{13}=\mathfrak A^{20}=\mathfrak A^{5} \ , $$ and, on looking into the table, one gets $ (0,1,1,0) $. ♦

What a simplification yields the exponential representation of the element for its inversion! ![]() — Here you are:

$$ \mathfrak a^{15} = \mathfrak e \quad \Rightarrow \quad \mathfrak a^{-1}=\mathfrak a^{14},\ \mathfrak a^{-2}=\mathfrak a^{13},\dots \quad for \quad \forall \mathfrak a \ne \mathfrak o \ . $$

Словом, наличие заранее вычисленной «таблицы логарифмов» существенно упрощает жизнь вычислителю. Хуже, если такой таблицы нет или вычисление (хранение) ее слишком затратно…

— Here you are:

$$ \mathfrak a^{15} = \mathfrak e \quad \Rightarrow \quad \mathfrak a^{-1}=\mathfrak a^{14},\ \mathfrak a^{-2}=\mathfrak a^{13},\dots \quad for \quad \forall \mathfrak a \ne \mathfrak o \ . $$

Словом, наличие заранее вычисленной «таблицы логарифмов» существенно упрощает жизнь вычислителю. Хуже, если такой таблицы нет или вычисление (хранение) ее слишком затратно…

Example. Compute $ x^{-11} \quad (\operatorname{modd} \ 2,x^6+x^5+1) $.

Solution. We take without prove that $ f_{}(x)=x^6+x^5+1 $ is an irreducible polynomial, and $ x_{} $ is a primitive element of the field $ \mathbf{GF}(64) $. The generalized Fermat theorem yields: $$ x^{-11} \equiv x^{2^6-1-11}\equiv x^{52} \quad (\operatorname{modd} \ 2,x^6+x^5+1) \ . $$ Utilize now the square-and-multiply algorithm: convert $ 52 $ into the binary form $ \underline{52}_{_{10}}=\underline{110100}_{_2} $ and compute the remainders $$ \begin{array}{|c|c|c|c|c|c|c|} \hline j & 1 & 2 & 3 & 4 & 5 &6 \\ \hline 52 & 1 & 1 & 0 & 1 & 0 &0 \\ \hline r_j(x) & x & x^2\times x & x^6 & (x^5+1)^2\times x & (x^4+x^3+x^2+1)^2 & (x^4+x^2+x+1)^2 \\ & \equiv_{_{2,f}} x & \equiv_{_{2,f}} x^3 & \equiv_{_{2,f}} x^5+1 & \equiv_{_{2,f}} x^4+x^3+x^2+1 & \equiv_{_{2,f}} x^4+x^2+x+1 & \equiv_{_{2,f}} x^5+x^4+x \\ \hline \end{array} $$ Answer. $ x^5+x^4+x $.

Linear equations

Let the elements of the field $ \mathfrak a_1,\dots,\mathfrak a_N, \mathfrak b $ be given. Linear equation over the field $ \mathbf{GF}(16) $ with respect to the indeterminates (variables) $ \mathfrak x_1,\dots,\mathfrak x_N $ is defined similarly to the case of infinite field, i.t. by the formula $$ \mathfrak a_1 \mathfrak x_1+\dots+\mathfrak a_N \mathfrak x_N = \mathfrak b \ . $$ This object is defined correctly since the multiplication operation in finite field is already introduced. Solution of this equation over the field $ \mathbf{GF}(16) $ is called any set of elements from $ \mathbf{GF}(16) $ $$ \mathfrak x_1=\alpha_1,\dots, \mathfrak x_N=\alpha_N \ , $$ which converts the equation into the true equality.

System of linear equation over $ \mathbf{GF}(16) $ is the collection of several linear equations from the indeterminates (variables) $ \mathfrak x_1,\dots,\mathfrak x_N $ $$ \left\{ \begin{array}{lllll} \mathfrak a_{11} \mathfrak x_1 &+\mathfrak a_{12}\mathfrak x_2&+ \ldots&+\mathfrak a_{1N}\mathfrak x_N &=\mathfrak b_1,\\ \mathfrak a_{21}\mathfrak x_1 &+\mathfrak a_{22}\mathfrak x_2&+ \ldots&+\mathfrak a_{2N}\mathfrak x_N &=\mathfrak b_2,\\ \dots & & & & \dots \\ \mathfrak a_{M1}\mathfrak x_1 &+\mathfrak a_{M2}\mathfrak x_2&+ \ldots&+\mathfrak a_{MN}\mathfrak x_N &=\mathfrak b_M. \end{array} \right. $$ Here $ \left\{\mathfrak a_{j k} \right\}_{j=1,\dots,M \atop k=1,\dots,N } $ and $ \{ \mathfrak b_{j} \}_{j=1,\dots,M} $ are treated as fixed (specialized) elements of $ \mathbf{GF}(16) $; they are called the system coefficients.

Let us discuss a possibility of the transfer of the results of the linear equations theory from the infinite fields ($ \mathbb Q $ or $ \mathbb R_{} $ or $ \mathbb C_{} $) into the finite ones. First of all, it should be noted that one of the cases possible for the infinite fields, does not happen for finite ones: the linear system over the finite field cannot possess infinite number of solutions. Therefore, in principle, one of the possible approaches for the linear system solving consists of exhaustive search in the set of all the combinations from $ 16_{} $ field elements. So, for the system of two equations in two indeterminates $$ \left\{ \begin{array}{lcl} \mathfrak a_{11} \mathfrak x_1 +\mathfrak a_{12}\mathfrak x_2 &= & \mathfrak b_1, \\ \mathfrak a_{21} \mathfrak x_1 +\mathfrak a_{22}\mathfrak x_2 &= & \mathfrak b_2, \end{array} \right. $$ one has to check $ 16^2 $ combinations for the pair $ (\mathfrak x_1,\mathfrak x_2) $. On keeping this method in mind, we will focus ourselves onto the constructive approaches which can borrowed from the infinite fields.

Are the Cramer formulas valid? — Multiply the fisrt equation of the system by $ \mathfrak a_{22} $ and subtract from it ( $ \equiv_{_2} $ add to it) the second one multiplied by $ \mathfrak a_{12} $: $$ \overbrace{(\mathfrak a_{11}\mathfrak a_{22}- \mathfrak a_{12} \mathfrak a_{21})}^{\Delta}\mathfrak x_1= \overbrace{\mathfrak a_{22} \mathfrak b_1 - \mathfrak a_{12} \mathfrak b_2}^{\Delta_1} \ . $$ Similarly, one gets: $$ (\mathfrak a_{11}\mathfrak a_{22}- \mathfrak a_{12} \mathfrak a_{21})\mathfrak x_2= \overbrace{-\mathfrak a_{21} \mathfrak b_1 + \mathfrak a_{11} \mathfrak b_2}^{\Delta_2} \ . $$ If the element $ \Delta= \mathfrak a_{11}\mathfrak a_{22}- \mathfrak a_{12} \mathfrak a_{21} $ differs from zero then it admits the inversion. Consequently, the solution to the system is unique and can be written down as $$ \mathfrak x_1=\Delta_1\cdot \Delta^{-1},\quad \mathfrak x_2=\Delta_2\cdot \Delta^{-1} \ . $$

Example. Solve the system of equations $$ \left\{ \begin{array}{lcl} (0,1,0,0) \mathfrak x_1 +(0,1,0,1)\mathfrak x_2 &= & (1,0,0,0), \\ (1,1,1,1) \mathfrak x_1 +(0,0,1,1)\mathfrak x_2 &= & (1,1,1,0). \end{array} \right. $$ in the field $ \mathbf{GF}(16) $ generated by $ f(x)=x^4+x+1 $.

Solution. One has2) $$ \Delta\equiv x^2(x+1)-(x^2+1)(x^3+x^2+x+1)\equiv x^3+x,\ $$ $$ \Delta_1 \equiv x^3(x+1)-(x^3+x^2+x)(x^2+1) \equiv x^3,\quad \Delta_2 \equiv x^2(x^3+x^2+x)-(x^3+x^2+x+1)x^3 \equiv x^3+x^2 \ , $$ and $$ \mathfrak x_1\equiv x^3(x^3+x)^{-1}\equiv x^3(x^3+x^2) \equiv x^3+x=(1,0,1,0), \quad \mathfrak x_2\equiv (x^3+x^2)(x^3+x)^{-1} \equiv x^3+x^2+x+1=(1,1,1,1) \ . $$ ♦

Solve the system of equations $$ \mathbf{a)} \left\{ \begin{array}{lcl} (0,1,0,0) \mathfrak x_1 +(1,0,1,0)\mathfrak x_2 &= & (1,0,0,0), \\ (1,1,1,1) \mathfrak x_1 +(0,0,1,1)\mathfrak x_2 &= & (1,1,0,1); \end{array} \right. \qquad \mathbf{b)} \left\{ \begin{array}{lcl} (0,1,0,0) \mathfrak x_1 +(1,0,1,0)\mathfrak x_2 &= & (0,1,0,1), \\ (1,1,1,1) \mathfrak x_1 +(0,0,1,1)\mathfrak x_2 &= & (1,1,0,1). \end{array} \right. $$ in the field from the previous example.

We can now introduce the determinant, as a polynomial from the elements of the field. Compared to the classical definition, in the $ \mathbf{GF}(16) $ one may not care of the signs of terms in the determinant expansion. Thus, the third order determinant can be introduced by the formula $$ \left| \begin{array}{lll} \mathfrak a_{11} & \mathfrak a_{12} & \mathfrak a_{13}\\ \mathfrak a_{21} & \mathfrak a_{22} & \mathfrak a_{23} \\ \mathfrak a_{31} & \mathfrak a_{32} & \mathfrak a_{33} \end{array} \right| =\mathfrak a_{11} \mathfrak a_{22} \mathfrak a_{33}+\mathfrak a_{12}\mathfrak a_{23} \mathfrak a_{31} + \mathfrak a_{21}\mathfrak a_{32} \mathfrak a_{13} + \mathfrak a_{31} \mathfrak a_{22} \mathfrak a_{13} + \mathfrak a_{21}\mathfrak a_{12}\mathfrak a_{33} + \mathfrak a_{11} \mathfrak a_{32} \mathfrak a_{23} \ . $$

With the determinant function being introduced, one may think of the notion of the matrix composed from the elements of $ \mathbf{GF}(16) $ aiming at representing the solution to the linear system via the inverse matrix. On postponing the development of this idea for the future, let us take another look on the solution to the previous example.

Consider the multiplication of the element $ \mathfrak a_1= (0,1,0,0) $ by an arbitrary element $ \mathfrak x =(x_0,x_1,x_2,x_3) $ of the same field3): $$ x^2(x_0x^3+x_1x^2+x_2x+x_3) \equiv x_0(x^2+x)+x_1(x+1)+x_2x^3+x_3x^2 \equiv x_2 x^3+(x_0+x_3)x^2+(x_0+x_1)x+x_1 \ . $$ Therefore, multiplication by $ (0,1,0,0) $ defines a mapping in the field $ \mathbf{GF}(16) $: $$ \mathfrak x=(x_0,x_1,x_2,x_3) \ \mapsto \ \mathfrak y =(x_2,x_0+x_3,x_0+x_1,x_1) \ . $$ Evidently, this is a linear mapping, namely the operator in the field. Therefore, one can define this operator using the operator matrix: $$ \mathfrak x \ \mapsto \ \mathfrak y=(x_0,x_1,x_2,x_3) \left(\begin{array}{cccc} 0 & 1 & 1 & 0 \\ 0 & 0 & 1 & 1 \\ 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \end{array} \right) \ . $$

The traditional form of the operator matrix application consists in its multiplication from the left-hand side the column of vector coordinates. As for the Coding Theory, it is used to tackle with the row vectors. This difference is not a principal one: the two approaches are connected by the operation of matrix transpose.

Consider the multiplication of the element $ \mathfrak x $ by another element, say, by $ \mathfrak a_2=(0,1,0,1) $. Similar speculations lead to a different matrix: $$ \mathfrak x \ \mapsto \ \mathfrak y=(x_0,x_1,x_2,x_3) \left(\begin{array}{cccc} 1 & 1 & 1 & 0 \\ 0 & 1 & 1 & 1 \\ 1 & 0 & 1 & 0 \\ 0 & 1 & 0 & 1 \end{array} \right) \ . $$ Now, one can rewrite the first equation from the system of the previous example $$ (0,1,0,0) \mathfrak x_1 +(0,1,0,1)\mathfrak x_2 = (1,0,0,0) $$ into the matrix form: on setting $ \mathfrak x_1=(x_{10},x_{11},x_{12},x_{13}) $, $ \mathfrak x_2=(x_{20},x_{21},x_{22},x_{23}) $ and using the componentwise (bitwise) summation modulo $ 2_{} $ (XOR) one arrives at: $$ (x_{10},x_{11},x_{12},x_{13}) \underbrace{\left(\begin{array}{cccc} 0 & 1 & 1 & 0 \\ 0 & 0 & 1 & 1 \\ 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \end{array} \right)}_{=\mathbf{A}_{11}} \oplus (x_{20},x_{21},x_{22},x_{23}) \underbrace{\left(\begin{array}{cccc} 1 & 1 & 1 & 0 \\ 0 & 1 & 1 & 1 \\ 1 & 0 & 1 & 0 \\ 0 & 1 & 0 & 1 \end{array} \right)}_{=\mathbf{A}_{12}} =(1,0,0,0) \ . $$ Resume: linear equation with respect to $ 2_{} $ indeterminates from the field $ \mathbf{GF}(16) $ is equivalent to the system of $ 4_{} $ linear equations with respect to $ 8_{} $ indeterminate bits. In the first representation, the equation is tackled as the double moduli $ 2,f(x) $ conguence, while in the second every equation of the system is modulo $ 2_{} $ congruence. We will refer to the latter as the equations over GF(2).

The second equation from the sytem can be treated in a similar way:

$$

(x_{10},x_{11},x_{12},x_{13})

\underbrace{\left(\begin{array}{cccc}

0 & 0 & 0 & 1 \\

1 & 0 & 0 & 1 \\

1 & 1 & 0 & 1 \\

1 & 1 & 1 & 1

\end{array}

\right)}_{=\mathbf{A}_{21}}

\oplus

(x_{20},x_{21},x_{22},x_{23})

\underbrace{\left(\begin{array}{cccc}

1 & 0 & 1 & 1 \\

1 & 1 & 0 & 0 \\

0 & 1 & 1 & 0 \\

0 & 0 & 1 & 1

\end{array}

\right)}_{=\mathbf{A}_{22}} =(1,1,1,0) \ ; $$

and the whole system of $ 2_{} $ equations over $ \mathbf{GF}(16) $ is equivalent to the system of $ 8_{} $ equations over $ \mathbf{GF}(2) $.

The latter can be naturally partioned and written down blockwise4):

$$

\left\{

\begin{array}{lcl}

\mathfrak x_1 \mathbf{A}_{11} +\mathfrak x_2 \mathbf{A}_{12}&= & \mathfrak b_1, \\

\mathfrak x_1\mathbf{A}_{21} + \mathfrak x_2 \mathbf{A}_{22} &= & \mathfrak b_2,

\end{array}

\right. \qquad \iff \qquad (\mathfrak x_1,\mathfrak x_2)_{1\times 8}

\left(

\begin{array}{ll}

\mathbf A_{11} & \mathbf A_{21} \\

\mathbf A_{12} & \mathbf A_{22}

\end{array}

\right)_{8 \times 8}= (\mathfrak b_1,\mathfrak b_2)_{1\times 8} \ .

$$

We intend to solve this system using its block structure.

Multiply the first matrix equation by the matrix $ \mathbf A_{22} $ from the right-hand side, while the second one by the matrix $ \mathbf A_{12} $ (also from the right-hand side), and subtract one obtained equation from the other one:

$$ \mathfrak x_1 (\mathbf A_{11}\mathbf A_{22}- \mathbf A_{21}\mathbf A_{12})+

\mathfrak x_2 (\mathbf A_{12}\mathbf A_{22}- \mathbf A_{22}\mathbf A_{12}) =\mathfrak b_1

\mathbf A_{22}- \mathfrak b_2 \mathbf A_{12} \ .

$$

The coefficient matrix of $ \mathfrak x_2 $ is a zero one since $ \mathbf A_{12}\mathbf A_{22}=\mathbf A_{22}\mathbf A_{12} $… Stop.

Stop!

Matrix multiplication is (usually) noncommutative! For arbitrary square matrices $ \mathbf A_{} $ and $ \mathbf B $, the equality $ \mathbf A \mathbf B = \mathbf B \mathbf A $ generically is not valid! Just in case, let us verify this property for our particulat pair of matrices5):

$$

\mathbf A_{12}\mathbf A_{22}=\left(\begin{array}{cccc}

2 & 2 & 2 & 1 \\

1 & 2 & 2 & 1 \\

1 & 1 & 2 & 1 \\

1 & 1 & 1 & 1

\end{array}

\right)\equiv_{_2}

\underbrace{\left(\begin{array}{cccc}

0 & 0 & 0 & 1 \\

1 & 0 & 0 & 1 \\

1 & 1 & 0 & 1 \\

1 & 1 & 1 & 1

\end{array}

\right)}_{=\mathbf{A}_{21}} = \mathbf A_{22}\mathbf A_{12} \ .

$$

It happens that the matrices commute! Moreover, absolutely unexpectedly the result of multiplication corresponds to the result of multiplication of the correspondien field elements:

$$ (0,1,0,1) \leftrightarrow \mathbf A_{12},\ (0,0,1,1) \leftrightarrow \mathbf A_{22} \quad

\Rightarrow (0,1,0,1)\cdot (0,0,1,1)=(1,1,1,1) \leftrightarrow \mathbf A_{21} \ . $$

Verify the commutativity for all the involved matrices — it is fulfilled ![]() One step further. If $ \mathbf A_{12}\mathbf A_{22}=\mathbf A_{22}\mathbf A_{12} $, we can terminate correctly our algorithm on solving the

linear system partioned into blocks with the aid of matrix formalism:

$$

\mathfrak x_1 (\mathbf A_{11}\mathbf A_{22}- \mathbf A_{21}\mathbf A_{12})=\mathfrak b_1

\mathbf A_{22}- \mathfrak b_2 \mathbf A_{12} \quad \Rightarrow \quad

\mathfrak x_1=(\mathfrak b_1 \mathbf A_{22}- \mathfrak b_2 \mathbf A_{12})(\mathbf A_{11}\mathbf A_{22}- \mathbf A_{21}\mathbf A_{12})^{-1} \ .

$$

Short pause: Does the inversion of the matrix exist? Verify:

$$

\left(\begin{array}{cccc}

0 & 1 & 1 & 0 \\

0 & 0 & 1 & 1 \\

1 & 0 & 0 & 0 \\

0 & 1 & 0 & 0

\end{array}

\right)

\left(\begin{array}{cccc}

1 & 0 & 1 & 1 \\

1 & 1 & 0 & 0 \\

0 & 1 & 1 & 0 \\

0 & 0 & 1 & 1

\end{array}

\right)-

\left(\begin{array}{cccc}

0 & 0 & 0 & 1 \\

1 & 0 & 0 & 1 \\

1 & 1 & 0 & 1 \\

1 & 1 & 1 & 1

\end{array}

\right)

\left(\begin{array}{cccc}

1 & 1 & 1 & 0 \\

0 & 1 & 1 & 1 \\

1 & 0 & 1 & 0 \\

0 & 1 & 0 & 1

\end{array}

\right)\equiv_{_2}

$$

$$

\equiv_{_2}

\overbrace{\left(\begin{array}{cccc}

1 & 1 & 1 & 1 \\

1 & 1 & 1 & 0 \\

0 & 1 & 1 & 1 \\

1 & 0 & 1 & 0

\end{array}

\right)}^{= \mathbf A_{00}} \ .

$$

Since $ \det \mathbf A_{00}=-1\equiv_{2} +1 $, the inverse matrix exists:

$$

\mathbf A_{00}^{-1}=

\left(\begin{array}{cccc}

1 & 0 & 1 & 0 \\

0 & 1 & 0 & 1 \\

1 & 0 & 1 & 1 \\

1 & 1 & 0 & 0

\end{array}

\right) \ .

$$

The last step:

$$

\mathfrak x_1=(\mathfrak b_1 \mathbf A_{22}- \mathfrak b_2 \mathbf A_{12})\mathbf A_{00}^{-1}

=((1,0,1,1)-(0,0,1,1))\mathbf A_{00}^{-1}=(1,0,1,0) \ .

$$

The result coincides with the answer to the example.

One step further. If $ \mathbf A_{12}\mathbf A_{22}=\mathbf A_{22}\mathbf A_{12} $, we can terminate correctly our algorithm on solving the

linear system partioned into blocks with the aid of matrix formalism:

$$

\mathfrak x_1 (\mathbf A_{11}\mathbf A_{22}- \mathbf A_{21}\mathbf A_{12})=\mathfrak b_1

\mathbf A_{22}- \mathfrak b_2 \mathbf A_{12} \quad \Rightarrow \quad

\mathfrak x_1=(\mathfrak b_1 \mathbf A_{22}- \mathfrak b_2 \mathbf A_{12})(\mathbf A_{11}\mathbf A_{22}- \mathbf A_{21}\mathbf A_{12})^{-1} \ .

$$

Short pause: Does the inversion of the matrix exist? Verify:

$$

\left(\begin{array}{cccc}

0 & 1 & 1 & 0 \\

0 & 0 & 1 & 1 \\

1 & 0 & 0 & 0 \\

0 & 1 & 0 & 0

\end{array}

\right)

\left(\begin{array}{cccc}

1 & 0 & 1 & 1 \\

1 & 1 & 0 & 0 \\

0 & 1 & 1 & 0 \\

0 & 0 & 1 & 1

\end{array}

\right)-

\left(\begin{array}{cccc}

0 & 0 & 0 & 1 \\

1 & 0 & 0 & 1 \\

1 & 1 & 0 & 1 \\

1 & 1 & 1 & 1

\end{array}

\right)

\left(\begin{array}{cccc}

1 & 1 & 1 & 0 \\

0 & 1 & 1 & 1 \\

1 & 0 & 1 & 0 \\

0 & 1 & 0 & 1

\end{array}

\right)\equiv_{_2}

$$

$$

\equiv_{_2}

\overbrace{\left(\begin{array}{cccc}

1 & 1 & 1 & 1 \\

1 & 1 & 1 & 0 \\

0 & 1 & 1 & 1 \\

1 & 0 & 1 & 0

\end{array}

\right)}^{= \mathbf A_{00}} \ .

$$

Since $ \det \mathbf A_{00}=-1\equiv_{2} +1 $, the inverse matrix exists:

$$

\mathbf A_{00}^{-1}=

\left(\begin{array}{cccc}

1 & 0 & 1 & 0 \\

0 & 1 & 0 & 1 \\

1 & 0 & 1 & 1 \\

1 & 1 & 0 & 0

\end{array}

\right) \ .

$$

The last step:

$$

\mathfrak x_1=(\mathfrak b_1 \mathbf A_{22}- \mathfrak b_2 \mathbf A_{12})\mathbf A_{00}^{-1}

=((1,0,1,1)-(0,0,1,1))\mathbf A_{00}^{-1}=(1,0,1,0) \ .

$$

The result coincides with the answer to the example.

Matrices

Interpretation of the Galois field formalism into that of matrices gives rise to the hypothesis of the existence of a closer relations between these languages. It seems that $ \mathbf{GF}(16) $ isomorphic to a substet of the $ 4_{} $th order (square) matrices. The problem is to find such a bijection: $$ \mathfrak a=(A_0,A_1,A_2,A_3) \ \leftrightarrow \ a(x)=A_0x^3+A_1x^2+A_2x+A_3 \ \leftrightarrow \ \mathbf{A} \ , $$ that admits the results of addition and multiplication. The last row of the matrix $ \mathbf{A} $ is divined from the treated examples: it coincides with $ \mathfrak a $: $$ \mathbf{A}= \left(\begin{array}{llll} \ast & \ast & \ast & \ast \\ \ast & \ast & \ast & \ast \\ \ast & \ast & \ast & \ast \\ A_0& A_1 & A_2 & A_3 \end{array} \right) \ . $$ What are the other rows? — These are the rows of coefficient of polynomials $$ a(x) x,\ a(x)x^2,\ a(x)x^3 \quad (\operatorname{modd} \ 2,x^4+x+1) , $$ i.e. the modulo $ 2_{} $ coefficients of the remainders of polynomials on division by $ f(x)=x^4+x+1 $. These coefficients are placed into the matrix in the following order: $$ \begin{array}{ll} \mathbf{A}= \left(\begin{array}{llll} \ast & \ast & \ast & \ast \\ \ast & \ast & \ast & \ast \\ \ast & \ast & \ast & \ast \\ A_0& A_1 & A_2 & A_3 \end{array} \right) & \begin{array}{lc} \leftarrow a(x)x^3 & (\operatorname{modd} \ 2,x^4+x+1) \\ \leftarrow a(x)x^2 & (\operatorname{modd} \ 2,x^4+x+1) \\ \leftarrow a(x)x & (\operatorname{modd} \ 2,x^4+x+1) \\ \leftarrow a(x) & (\operatorname{modd} \ 2,x^4+x+1) \end{array} \\ { } \qquad \quad \ \uparrow \quad \ \ \uparrow \quad \ \ \uparrow \quad \ \uparrow & \\ { } \qquad \quad \ x^3 \quad \ x^2 \quad \ x \ \quad 1 & \end{array} \ . $$ Where does this matrix appear from? Let us return to the problem of organization of polynomial multiplication moduli $ 2,f_{}(x) $ which has been posed ☝ ABOVE. This time, we organize computation of $ a(x)b(x) \ (\operatorname{modd} \ 2,f(x)) $ for $$ a(x)=A_0x^3+A_1x^2+A_2x+A_3, \ B(x)=B_0x^3+B_1x^2+B_2x+B_3 $$ in the other scheme: $$ \begin{array}{ll} a(x)b(x) \ (\operatorname{modd} \ 2,f(x)) & = \left[ a(x)x^3 \ (\operatorname{modd} \ 2,f(x))\right]B_0+ \\ &+\left[ a(x)x^2 \ (\operatorname{modd} \ 2,f(x))\right]B_1+ \\ &+\left[ a(x)x \ (\operatorname{modd} \ 2,f(x))\right]B_2+\\ &+\left[ a(x) \right]B_3. \end{array} $$ Coefficients of polynomials standing in every pair of brackets $ [ \mbox{ } \ ] $ constitute the rows of the matrix $ \mathbf{A} $. Consequently, the linear combination of these remainders on multiplying them by the scalars $ B_0,B_1,B_2,B_3 $ corresponds to the multiplication of the row $ (B_0,B_1,B_2,B_3) $ by the matrix $ \mathbf{A} $: $$ (B_0,B_1,B_2,B_3) \mathbf{A} \ . $$ The matrix $ \mathbf A $ is constructed bottom-up, starting from the last row: its third row $$ \mathbf{A}= \left(\begin{array}{llll} M_0 & M_1 & M_2 & M_3 \\ L_0 & L_1 & L_2 & L_3 \\ K_0 & K_1 & K_2 & K_3 \\ A_0 & A_1 & A_2 & A_3 \end{array} \right) $$ is obtained from the fourth one via the algorithm of multiplication by x and computation of remainder on division by $ f(x)=x^3+a_1x^2+a_2x+a_3 $ (modulo $ 2_{} $): $$ \mathfrak (K_0,K_1,K_2,K_3) = \left\{ \begin{array}{lr} (A_1,A_2,A_3,0) & if \ A_0=0, \\ (A_1+a_1,A_2+a_2,A_3+a_3, a_4) \pmod{2} & if \ A_0=1. \end{array} \right. $$ The second row is obtained from the third one in a similar way, and so forth.

Example.

$$ \mathfrak a = \mathfrak e=(0,0,0,1) \ \leftrightarrow \ \mathbf{A}=E=\left(\begin{array}{llll}

1 & 0 & 0 & 0 \\

0 & 1 & 0 & 0 \\

0& 0 & 1 & 0 \\

0& 0 & 0 & 1

\end{array}

\right) , $$

i.e. the unity element of the field corresponds to the identity $ 4_{} $th order matrix. Next,

$$ (0,0,1,0) \ \leftrightarrow \ \mathbf{F}= \left(\begin{array}{llll}

0 & 0 & 1 & 1 \\

1 & 0 & 0 & 0 \\

0& 1 & 0 & 0 \\

0& 0 & 1 & 0

\end{array}

\right)

,\ (0,0,1,1) \ \leftrightarrow \ \left(\begin{array}{llll}

1 & 0 & 1 & 1 \\

1 & 1 & 0 & 0 \\

0& 1 & 1 & 0 \\

0& 0 & 1 & 1

\end{array}

\right),\ (0,1,0,0) \ \leftrightarrow \ \left(\begin{array}{llll}

0 & 1 & 1 & 0 \\

0 & 0 & 1 & 1 \\

1& 0 & 0 & 0 \\

0& 1 & 0 & 0

\end{array}

\right),\dots $$

Verify now that the third matrix is the exponent of the first one, namely

$ \mathbf{F}^2 \pmod{2} $. The second matrix is also a power of the first one: it equals $ \mathbf{F}^4 \pmod{2} $.

Why does it happen? ![]() — Not yet clear. However, we do not come into the contradiction with the с «Logarithm table» from Section

☝

EXPONENTIATION:

$$ {} \mbox{ if }\ (0,0,1,0) \leftrightarrow \ x \mbox{ then } \ (0,0,1,1) \leftrightarrow \ x^4 \quad \mbox{ and } \quad (0,1,0,0) \leftrightarrow \ x^2 \ . $$

♦

— Not yet clear. However, we do not come into the contradiction with the с «Logarithm table» from Section

☝

EXPONENTIATION:

$$ {} \mbox{ if }\ (0,0,1,0) \leftrightarrow \ x \mbox{ then } \ (0,0,1,1) \leftrightarrow \ x^4 \quad \mbox{ and } \quad (0,1,0,0) \leftrightarrow \ x^2 \ . $$

♦

What a wonderful matrices have been obtained? To identify them, let us begin with the simplest matrix, namely $ \mathbf{F} $, providing the multiplication of the field element by the polynomial identically equal to $ x_{} $. What is its structure in the case of arbitrary field $ \mathbf{GF}(2^n) $ with multiplication moduli $$ 2, f(x)=x^n+a_1x^{n-1}+a_2x^{n-2}+\dots+ a_n \ ? $$ — This can be easily established: $$ \mathbf{F}= \left( \begin{array}{lllllll} a_1 & a_{2} & a_{3} & \dots & & a_{n-1} & a_n \\ 1 & 0 & 0 &\dots & 0 & 0 & 0 \\ 0 & 1 & 0 & \dots & 0 & 0 & 0 \\ \vdots& && \ddots && & \vdots \\ 0 & 0 & 0 & \dots & 1 & 0 & 0 \\ 0 & 0 & 0 & \dots & 0 & 1 & 0 \end{array} \right)_{n \times n} \ . $$ This matrix6) is known as the Frobenius matrix or the companion matrix of the polynomial $ f_{}(x) $.

It is more complicated to detect a nature (origin) of the general form of the matrix $ \mathbf{A} $ corresponding $ a(x)=A_0x^3+A_1x^2+A_2x+A_3 $. In the case of infinite fields ( $ \mathbb Q $ or $ \mathbb R_{} $ or $ \mathbb C_{} $), this matrix lies at the basis of the Bézout method of computation of the resultant of polynomials $ f_{}(x) $ and $ a(x) $. What do we know about these matrices? If $ \mathbf{B} $ is such a matrix corresponding to the polynomial $ b(x)=B_0x^3+B_1x^2+B_2x+B_3 $ then $$ \mathbf{A} \mathbf{B}=\mathbf{B} \mathbf{A} \ , $$ i.e. the Bézout matrices commutate. Moreover, the common result for these products is the Bézout matrix corresponding to polynomial $ a(x)b(x) $ (and for its remainder on division by $ f_{}(x) $). The determinant of the matrix $ \mathbf{A} $ equals the resultant of $ f_{}(x) $ and $ a(x) $; it does not vanish iff the polynomials $ f_{}(x) $ and $ a_{}(x) $ are relatively prime.

Let us now transfer these results into a finite field. Commutativity of matrix multiplication is remained valid when we pass to the congruences modulo $ 2_{} $ with the resulting matrix corresponding to $ a(x)b(x) \ (\operatorname{modd} \ 2,x^4+x+1) $. Therefore, the established correspondence $$ \mathfrak a=(A_0,A_1,A_2,A_3) \ \leftrightarrow \ a(x)=A_0x^3+A_1x^2+A_2x+A_3 \ \leftrightarrow \ \mathbf{A} $$ keeps also the result of multiplication: the product of the elements corresponds the product of matrices. Therefore, the element $ \mathfrak a^{-1} $ correspods to $ \mathbf{A}^{-1} $. This fact can be confirmed additionally via representation of $ \mathfrak a^{-1} $ in the polynomial form given at the end of Section ☝ DIVISION. Indeed, $$ { }_{}\mbox{ if } \quad \mathbf{A}^{-1}= \left(\begin{array}{llll} \ast & \ast & \ast & \ast \\ \ast & \ast & \ast & \ast \\ \ast & \ast & \ast & \ast \\ B_0& B_1 & B_2 & B_3 \end{array} \right) \quad \mbox{ then } \quad \mathfrak a^{-1} =B_0x^3+B_1x^2+B_2x+ B_3 \, . $$ On the other hand, the elements of the last row of the matrix $ \mathbf{A}^{-1} $ are the cofactors to the elements of the last column of the matrix $ \mathbf{A} $, therefore $$ \mathfrak a^{-1}= \left|\begin{array}{llll} M_0 & M_1 & M_2 & x^3 \\ L_0 & L_1 & L_2 & x^2 \\ K_0 & K_1 & K_2 & x \\ A_0 & A_1 & A_2 & 1 \end{array} \right| \ . $$

Will the given bijection keep the correspondence between the matrix $ \mathbf{A}+ \mathbf{B} $ and the field element $ \mathfrak a+\mathfrak b $? — Yes, since the remainder of the sum of polynomials coincides with the sum of remainders: $$ (a(x)+b(x))x^k \quad (\operatorname{modd} \ 2,x^4+x+1) $$ $$ \equiv a(x) x^k (\operatorname{modd} \ 2,x^4+x+1) + b(x) x^k \quad (\operatorname{modd} \ 2,x^4+x+1) $$ $ \forall k \in \{0,1,2,3\} $. This completes the proof of the isomorphism.

From this follows that a matrix $ \mathbf{A} $ corresponding to the polynomial $ a(x)=A_0x^3+A_1x^2+A_2x+A_3 $ can be represented as a linear combination of the exponents of the companion matrix, i.e. a polynomial of the matrix $ \mathbf{F} $ $$ \mathbf{A} = A_0 \mathbf{F}^3\oplus A_1 \mathbf{F}^2\oplus A_2\mathbf{F}\oplus A_3 E = a (\mathbf{F}) \ ; $$ while, on the other hand, it equals some exponent of that companion matrix: $$ \mathbf{A} = \mathbf{F}^m \quad \mbox{ for some } \quad m\in \{0,1,2,\dots \} . $$ The sequence $ \mathbf{F}^0=E, \mathbf{F}^1, \mathbf{F}^2,\dots, $ is a cyclic one: $ \mathbf{F}^{15}=E $.

Resume: In addition to the declared ☝ ABOVE represenations for the nonzero element of the Galois field, consider an extra one.

4. The nonzero element of $ \mathbf{GF}(16) $ is an exponent of the companion matrix $ \mathbf{F} $ or the polynomial $ a(\mathbf{F}) $ ( $ \deg a(x) \le 3 $) from this matrix. Addition is defined elementwise modulo $ 2_{} $, and multiplication coincides with the matrix multiplication7).

In such an interpretation, there is no necessity in introduction of the Galois field multiplication operation via the formalism of polynomial division by the generating polynomial $ f_{}(x) $. By the way, were is it hidden in the new, i.e. matrix, formalism? — Its coefficients constitute the first row of the companion matrix $ \mathbf{F} $, and this is their unique occurence.

Restoration of lost information...

In the preceding sections, we have collected algebraic formalism enough to explain the ideas underying the error correcting algorithms.

Assume that we have a row of $ 20_{} $ bits $ (x_1,\dots,x_{20}) $ which is involved in the communication process — either is sent off in some communication channel or is written down to some storage medium.

Assume this process is subjected to the noise, and some of the bits might be lost (or damaged, corrupted). The problem is to manage the communication process in such a way that permits one to restore the true data (correct the errors).

To solve this problem, one is in need of resources. These resources first include an extra service bits addition (to the information bits $ (x_1,\dots,x_{20}) $); with involvement of these service bits into the communication process. These resorses also include a processing units which organize the filling the service bits with the content depending on the content of information bits with aid of further restoration of these bits probably lost within the communication process.

... with known positions of errors

We demonstrate this with an example. Calculate the checksum $$ s=\sum_{i=1}^{20} x_i \pmod{2} \ . $$ The row $ (x_1,\dots,x_{20},s) $ participates in the communication process. If during this process the exactly one bit is suspicied to become erroneous and the position $ j_{} $ of this bit is known , then the restoration of this probably damaged bit is possible via the formula $$ x_j = s+ \sum_{i=1 \atop i\ne j}^{20} x_i \pmod{2} \ . $$ This formula is the universal one: it is applicable for any value of the subscript $ j_{} $.

Essential difficulties arrise when the problem is to restore several damaged bits. If their positions could be predicted a priori, the algorithm would be trivial. For instance, the two checksums $$ \left\{ \begin{array}{lcl} s_1&=& x_1+x_3+\dots+x_{19}, \\ s_2 &=&x_2+x_4+\dots+x_{20} \end{array} \right. \pmod{2} $$ permit one to correct any pair of bits $ x_{j} $ and $ x_{k} $ when the subscripts $ j_{} $ and $ k_{} $ are of distinct parities8). However, the available hardware rarely assures us in such an opportunity. Therefore, if in the process of communication of the row $ (x_1,\dots,x_{20},s_1,s_2) $, the suspicion arrises that the errors occur in positions $ j_{} $ and $ k_{} $ of the same parity, we cannot correct these bits on the base of the computed checksums.

Что делать? Очевиден подход к решению задачи: нужно выписать набор (систему) линейных соотношений (уравнений) относительно величин $ x_1,\dots,x_{20} $ так, чтобы имелась возможность разрешить полученную систему относительно любых двух величин $ x_{j} $ и $ x_{k} $. Проблема за малым — как построить такие соотношения?

We will solve the problem via its complication. Assume the possibility of the fault of $ \le 8_{} $ of information bits in the row $ X=(x_1,\dots,x_{20}) $. In order to organize the restoration procedure for these bits, let us accomplish our information row with the $ 8_{} $ service bits row $ S= (s_1,\dots,s_8) $. We will call them syndromes, and will compute them as linear combinations of information bits $$ s_i=a_{i1} x_1+\dots+a_{i,20}x_{20} \pmod{2} \quad npu \quad i\in \{1,\dots,8\} $$ for some — as yet to be determined — coefficients $ \{a_{i\ell}\} \subset \{0,1\} $.

Make an important assumption: let the $ 8_{} $ potentially erroneous bits be located not absolutely randomly within the information row, but situated in just only two of $ 4_{} $-bits blocks (rows) $ X_j $ and $ X_k $ composing the information row $ X=(X_1,X_2,X_3,X_4,X_5) $.

Rewrite now the $ 8_{} $ linear relatioships for syndromes we are searching for, in the matrix form: $$ X_{1\times 20} \mathbf{A}_{20\times 8}=S_{1\times 8} $$ and treat this equation blockwise. So, if $$ \mathbf{A}= \left( \begin{array}{ll} \mathbf{A}_{11} & \mathbf{A}_{12} \\ \mathbf{A}_{21} & \mathbf{A}_{22} \\ \mathbf{A}_{31} & \mathbf{A}_{32} \\ \mathbf{A}_{41} & \mathbf{A}_{42} \\ \mathbf{A}_{51} & \mathbf{A}_{52} \end{array} \right) \quad and \quad S=(S_1,S_2), $$ then $$ \left\{ \begin{array}{lcl} X_1\mathbf{A}_{11}\oplus X_2\mathbf{A}_{21}\oplus X_3\mathbf{A}_{31} \oplus X_4\mathbf{A}_{41} \oplus X_5\mathbf{A}_{51}&=&S_1, \\ X_1\mathbf{A}_{12}\oplus X_2\mathbf{A}_{22}\oplus X_3\mathbf{A}_{32} \oplus X_4\mathbf{A}_{42} \oplus X_5\mathbf{A}_{52}&=&S_2. \end{array} \right. $$ The problem now is to select the matrix $ \mathbf{A} $ composing blocks such that the last system be resolvable with respect to any pair of information blocks $ X_{j} $ and $ X_k $.

For this aim, let us choose these blocks $ \{\mathbf{A}_{i,\ell}\} $ from the set $ \{ \mathbf F^{m} \}_{m=0}^{15} $ of powers of the companion matrix for an irreducible modulo $ 2_{} $ polynomial $ f(x)=x^4+x+1 $, introduced in the previous section. Since these matrices commute, one may deal with them as with (rational or real or complex) numbers. Thus, if we take the system in the form9) $$ \left\{ \begin{array}{lcl} X_1E + X_2E+ X_3 E + X_4E + X_5E&=&S_1, \\ X_1E+ X_2\mathbf F+ X_3\mathbf F^2 + X_4\mathbf F^3 + X_5\mathbf F^4&=&S_2, \end{array} \right. $$ then its solution with respect to any pairs of information blocks $ X_{j} $ and $ X_{k} $ is possible with the aid of the algorithm which will be illuminated for the case $ j=2, k=4 $:

1. Multiply the first equation from the right-hand side by the matrix $ \mathbf F^3 $ and add to the second one: $$ X_{2}( \mathbf F + \mathbf F^3 ) =S_2+S_1\mathbf F^3 +X_1(E+\mathbf F^3)+X_3(\mathbf F^2 + \mathbf F^3 )+X_5(\mathbf F^4 + \mathbf F^3) \ ; $$

2. Find the inversion for the matrix $ \mathbf F + \mathbf F^3 $: $$( \mathbf F + \mathbf F^3 )^{-1}= \left( \begin{array}{llll} 1 & 1 & 1 & 1\\ 1 & 1 & 1 & 0\\ 0 & 1 & 1 & 1 \\ 1 & 0 & 1 & 0 \end{array} \right)^{-1} = \left( \begin{array}{llll} 1 & 0 & 1 & 0\\ 0 & 1 & 0 & 1\\ 1 & 0 & 1 & 1 \\ 1 & 1 & 0 & 0 \end{array} \right)=\mathbf F^2 + \mathbf F^3 \ ; $$ 3. Multiply the last obtained equation by this matrix from the right-hand side: $$ X_{2}=S_2(\mathbf F^2 + \mathbf F^3)+S_1(\mathbf F^5 + \mathbf F^6) +X_1(\mathbf F^2+\mathbf F^3+\mathbf F^5+\mathbf F^6)+X_3(\mathbf F^4 + \mathbf F^6 )+X_5(\mathbf F^5 + \mathbf F^7); $$ 4. On evaluation of $ X_{2} $, the value for $ X_{4} $ can be obtained from the first of initial equations.

Example. Let $$ X_1=(1,0,1,0),\ X_2=(0,1,1,1),\ X_3=(0,1,0,1),\ X_4=(1,0,1,0),\ X_5=(0,0,1,1) \ . $$ Compute the syndromes $ S_1 $ and $ S_2 $: $$ S_1=(0,0,0,1), S_2=(1,0,0,1) \ . $$ If the vectors $ X_2 $ and $ X_4 $ are lost, then the preceding algorithm yields: $$ S_2 \left( \begin{array}{llll} 1 & 0 & 1 & 0\\ 0 & 1 & 0 & 1\\ 1 & 0 & 1 & 1 \\ 1 & 1 & 0 & 0 \end{array} \right)+S_1 \left( \begin{array}{llll} 1 & 1 & 1 & 1\\ 1 & 1 & 1 & 0\\ 0 & 1 & 1 & 1 \\ 1 & 0 & 1 & 0 \end{array} \right)+X_1 \left( \begin{array}{llll} 0 & 1 & 0 & 1\\ 1 & 0 & 1 & 1\\ 1 & 1 & 0 & 0 \\ 0 & 1 & 1 & 0 \end{array} \right)+X_3 \left( \begin{array}{llll} 0 & 0 & 0 & 1\\ 1 & 0 & 0 & 1\\ 1 & 1 & 0 & 1 \\ 1 & 1 & 1 & 1 \end{array} \right)+X_5 \left( \begin{array}{llll} 0 & 0 & 1 & 0\\ 0 & 0 & 0 & 1\\ 1 & 0 & 0 & 1 \\ 1 & 1 & 0 & 1 \end{array} \right)= $$ $$ =(0,1,1,1)= X_2 \ . $$

The present section is devoted to mathematical backgrounds for RAID 6 technology.

... with unknown positions of errors

We address now a more complicated problem where the communication errors cannot be detected by the available hardware. We do not know the positions of the erroneous blocks, we are not assured even in the fact of the error occurence.

For a start, assume first that only one block can be damaged during the communication process but we do not know its position. In order to correct this potential failure, we anticipate the communication process of the row $ X=(X_1,X_2,X_3,X_4,X_5) $ with the syndrome computation $$ \left\{ \begin{array}{lllllcl} X_1& + X_2 &+ X_3& + X_4& + X_5&=&S_1, \\ X_1&+ X_2\mathfrak a&+ X_3 \mathfrak a^2&+ X_4\mathfrak a^3&+ X_5\mathfrak a^4&=&S_2. \end{array} \right. $$ Here $ \mathfrak a $ stands for an arbitrary element of $ \mathbf{GF}(16) $ with the only restriction that all its powers $ \{\mathfrak a^{\ell} \}_{\ell=0}^5 $ are distinct. (We do not care as yet in the particular representation of the field elements — is it a vector or polynomial or matrix form). Let the communication process of the row $ X=(X_1,X_2,X_3,X_4,X_5) $ possibly results in the damage of only one block $ X_{j} $, but the position $ j_{} $ of this block is unknown for us. Recalculation the syndrome values for the new row $ \tilde X=(\tilde X_1,\tilde X_2,\tilde X_3,\tilde X_4,\tilde X_5) $ yields $$ \left\{ \begin{array}{lllllcl} \tilde X_1& + \tilde X_2 &+ \tilde X_3& + \tilde X_4& + \tilde X_5 &=& \tilde S_1, \\ \tilde X_1 &+ \tilde X_2\mathfrak a&+ \tilde X_3 \mathfrak a^2&+ \tilde X_4\mathfrak a^3&+ \tilde X_5\mathfrak a^4&=& \tilde S_2. \end{array} \right. $$ Sum the corresponding equations of the initial and the final linear systems. Since $ \tilde X_i = X_i $ for all $ i \ne j $, we arrive at: $$ X_j + \tilde X_j = S_1+ \tilde S_1,\ (X_j + \tilde X_j) \mathfrak a^{j-1} = S_2+ \tilde S_2 \ . $$ If $ S_1 \ne \tilde S_1 $ then the error occurence is confirmed. Then $$ (S_1+ \tilde S_1)\mathfrak a^{j-1} = S_2+ \tilde S_2 \ $$ and the element $ \mathfrak a^{j-1} $ can be found from the last equation: $$ \mathfrak a^{j-1} = (S_2+ \tilde S_2)(S_1+ \tilde S_1)^{-1} \ ; $$ we just remind that all the involved operations (addition, multiplication, inversion) are performed in $ \mathbf{GF}(16) $. Thus, to find the error position $ j_{} $ one has to compute the right-hand side of the last equation and to search the value of the exponent $ j_{}-1 $ in the «Logarithm table» similar to the one presented in Section EXPONENTIATION (i.e. the discrete logarithm base $ \mathfrak a $). On evaluating $ j_{} $, the content of the erroneous block can be restored via the formula $$ X_j = \tilde X_j + (S_1+ \tilde S_1) \ . $$

Example. Let the communication process results in the row consisting of blocks $$ \tilde X_1=(1,0,1,0),\ \tilde X_2=(1,1,0,0),\ \tilde X_3=(1,1,1,1),\ \tilde X_4=(0,1,1,0),\ \tilde X_5=(0,0,0,1).\ $$ Assumig that exactly one of these blocks is erroneous, find its position and correct its content on the base of the syndrome values $$S_1= (0,1,0,0), \tilde S_1= (1,1,1,0),\ S_2= (0,0,0,1), \tilde S_2= (1,1,1,0) $$ within the just outlined scheme computed in the field $ \mathbf{GF}(16) $ arithmetic with $ \mathfrak a=(0,0,1,0)=x $ multiplication defined moduli $ 2,x^4+x+1 $.

Solution. $$ x^{j-1}=(1,1,1,1)\cdot(1,0,1,0)^{-1}=(1,1,1,1) \left(\begin{array}{rrrr} 1 & 1 & 1 & 1 \\ 1 & 1 & 1 & 0 \\ 0 & 1 & 1 & 1 \\ 1 & 0 & 1 & 0 \end{array} \right)^{-1}=(1,0,0,0)=x^3 \ . $$ Therefore $ j=4_{} $ with the true block content: $ X_4=(0,1,1,0)+(1,0,1,0)=(1,1,0,0) $. ♦

An extension of the suggested approach to the case of several erroneous blocks neads greater sophistication. Assusme now that the communication process for the information row $ X=(X_1,X_2,X_3,X_4,X_5) $ results in the damage of exactly two blocks and the positions $ j_{} $ and $ k_{} $ of these blocks is unknown for us. This time, the number of indeterminates in the problem increases to four ($ j,k,X_j,X_k $), and one may expect that, to determine their values, one is in need the same number of relations, i.e. we have to organize the computation of $ 4_{} $ syndrome values. Similar to the case of one error occurence, we suggest the new system of relations as follows: $$ \left\{ \begin{array}{lllllcl} X_1& + X_2 &+ X_3& + X_4& + X_5&=&S_1, \\ X_1&+ X_2\mathfrak a&+ X_3 \mathfrak a^2&+ X_4\mathfrak a^3&+ X_5\mathfrak a^4&=&S_2, \\ X_1&+ X_2\mathfrak a^2&+ X_3 \mathfrak a^4&+ X_4\mathfrak a^6&+ X_5\mathfrak a^8&=&S_3,\\ X_1&+ X_2\mathfrak a^3&+ X_3 \mathfrak a^6&+ X_4\mathfrak a^{9}&+ X_5\mathfrak a^{12}&=&S_4. \end{array} \right. $$ If the values of these syndromes for the resulting communication row $ \tilde X=(\tilde X_1, \tilde X_2,\tilde X_3,\tilde X_4,\tilde X_5) $ are equal to $ \{\tilde S_i\}_{i=1}^4 $ with at least one of them not coinciding with its former value, i.e. $ \tilde S_i \ne S_i $, then one gets the relationships for determining $ X_j,X_k $ as follows: $$ \left\{ \begin{array}{llcl} (X_j+\tilde X_j)& + (X_k+\tilde X_k) &=&S_1 +\tilde S_1, \\ (X_j+\tilde X_j)\mathfrak a^{j-1}& + (X_k+\tilde X_k)\mathfrak a^{k-1} &=&S_2 +\tilde S_2, \\ (X_j+\tilde X_j)\mathfrak a^{2(j-1)}& + (X_k+\tilde X_k)\mathfrak a^{2(k-1)} &=&S_3 +\tilde S_3, \\ (X_j+\tilde X_j)\mathfrak a^{3(j-1)}& + (X_k+\tilde X_k)\mathfrak a^{3(k-1)} &=&S_4 +\tilde S_4. \end{array} \right. $$ Let us first evaluate $ j_{} $ and $ k_{} $. Since these indeterminates are involved into the system in a nonlinear manner, we organize their computation via an intermediate evaluation of the elements $ \mathfrak a^{j-1} $ and $ \mathfrak a^{k-1} $. We intend to construct an equation over $ \mathbf{GF}(16) $ with the zeros conciding with these elements. We will first look for the quadric equation $$ \sigma_0\mathfrak e + \sigma_1\mathfrak x + \sigma_2\mathfrak x^2 = \mathfrak o $$ in the variable $ \mathfrak x $ with indeterminate coefficients $ \{ \sigma_0, \sigma_1, \sigma_2 \}\subset \mathbf{GF}(16) $. In order to determine the latter, multiply the equalities obtained from the equation via specializations $ \mathfrak x =\mathfrak a^{j-1} $ and $ \mathfrak x =\mathfrak a^{k-1} $ by appropriate elements from $ \mathbf{GF}(16) $ : $$ \left\{ \begin{array}{rl|llc} \sigma_0\mathfrak e+\sigma_1\mathfrak a^{j-1}+\sigma_2\mathfrak a^{2(j-1)}&=0, & \times (X_j+\tilde X_j) & & \\ & & & & + \\ \sigma_0\mathfrak e+\sigma_1\mathfrak a^{k-1}+\sigma_2\mathfrak a^{2(k-1)}&=0 & \times (X_k+\tilde X_k) & & \end{array} \right. $$ and add the results. Taking into account the above relationships, the resulting equation can be rewritten in the form: $$ \sigma_0(S_1 +\tilde S_1)+\sigma_1(S_2 +\tilde S_2)+\sigma_2(S_3 +\tilde S_3)= \mathfrak o \ . $$ To construct another equation, let us make a new cobination of the equations: $$ \left\{ \begin{array}{rl|llc} \sigma_0\mathfrak e+\sigma_1\mathfrak a^{j-1}+\sigma_2\mathfrak a^{2(j-1)}&=0, & \times (X_j+\tilde X_j)\mathfrak a^{j-1} & & \\ & & & & + \\ \sigma_0\mathfrak e+\sigma_1\mathfrak a^{k-1}+\sigma_2\mathfrak a^{2(k-1)}&=0 & \times (X_k+\tilde X_k)\mathfrak a^{k-1} & & \end{array} \right. $$ this results in $$ \sigma_0(S_2 +\tilde S_2)+\sigma_1(S_3 +\tilde S_3)+\sigma_2(S_4 +\tilde S_4)= \mathfrak o \ . $$ The pair of obtained equations permits one to determine the triple of coefficients $ \sigma_0, \sigma_1, \sigma_2 $ — up to a common multiple. The obtained equation can be represented in determinantal form as $$ \left| \begin{array}{ccc} S_1 +\tilde S_1 & S_2 +\tilde S_2 & S_3 +\tilde S_3 \\ S_2 +\tilde S_2 & S_3 +\tilde S_3 & S_4 +\tilde S_4 \\ 1 & \mathfrak x & \mathfrak x^2 \end{array} \right|= \mathfrak o \ . $$ If we succeed in finding the zeros (roots) $ \{\mathfrak x_1, \mathfrak x_2 \} $ for this equation then the error positions can be found from the equations $ \mathfrak a^{j-1}=\mathfrak x_1, \mathfrak a^{k-1}=\mathfrak x_2 $ with the aid of «Logarithm table» from Section ☞ EXPONENTIATION.

After evaluation of $ j_{} $ and $ k_{} $ the true values for the corrupted blocks are restored from the system of linear equations $$ \left\{ \begin{array}{llcl} (X_j+\tilde X_j)& + (X_k+\tilde X_k) &=&S_1 +\tilde S_1, \\ (X_j+\tilde X_j)\mathfrak x_1& + (X_k+\tilde X_k)\mathfrak x_2 &=&S_2 +\tilde S_2. \end{array} \right. $$

Example. Let the communication process results in the row consisting of blocks $$ \tilde X_1=(1,1,1,1),\ \tilde X_2=(1,1,1,1),\ \tilde X_3=(1,1,1,1),\ \tilde X_4=(1,0,1,1),\ \tilde X_5=(0,1,1,0)\ . $$ Under assumption that the number of erroneous blocks equals two, find their positions and correct their content using the following table for the syndrome values $$ \begin{array}{|c|c|c|c|c|} \hline j & 1 & 2 & 3 & 4 \\ \hline S_j & (0,1,0,0) & (0,1,1,1) & (1,1,0,1) & (0,1,1,0) \\ \hline & & & & \\ \tilde S_j & (0,0,1,0) & (0,1,1,0) & (0,1,0,0) & (0,0,0,0) \\ \hline \end{array} $$ computed in the Galois field from the previous example.

Solution. Compose the equation $$ \left| \begin{array}{ccc} S_1 +\tilde S_1 & S_2 +\tilde S_2 & S_3 +\tilde S_3 \\ S_2 +\tilde S_2 & S_3 +\tilde S_3 & S_4 +\tilde S_4 \\ 1 & \mathfrak x & \mathfrak x^2 \end{array} \right|= \mathfrak o \quad \iff \quad \left| \begin{array}{ccc} (0,1,1,0) & (0,0,0,1) & (1,0,0,1) \\ (0,0,0,1) & (1,0,0,1) & (0,1,1,0) \\ 1 & \mathfrak x & \mathfrak x^2 \end{array} \right|= \mathfrak o $$ $$ \iff \quad \mathfrak x^2 (0,0,1,0)+ \mathfrak x (1,1,1,0)+(1,0,1,1) = \mathfrak o \quad \iff \quad \mathfrak x^2 \cdot x + \mathfrak x \cdot x^{11}+ x^7 = \mathfrak o \ . $$ It can be verified that zeros of this equations are $ \mathfrak x_1 = x^2 $ and $ \mathfrak x_2 = x^4 $. Hence, $ j=3 $ and $ k=5 $ are the positions of the erroneous blocks.

Find now the values for $ X_3+\tilde X_3 $ and $ X_5+\tilde X_5 $ from the linear system $$ \left\{ \begin{array}{llcl} (X_3+\tilde X_3)& + (X_5+\tilde X_5) &=&x^2+x, \\ (X_3+\tilde X_3)x^2& + (X_5+\tilde X_5)x^4 &=&1. \end{array} \right. $$

Answer. $ X_3=(0,0,0,0), X_5=(1,1,1,1) $.

The reasoning of the preceding part of the present section contains an essential gap. It has been implicitly assumed that the communication process possibly ifluence just only the information blocks but not syndromes $ S_1,S_2,\dots $ Nevertheless the latter should also participate in the communication process and, therefore, should be treated as subjected to damage. The above suggested error correcting algorithm works in the assumption that both pre- and post-communication syndrome values are reliable. We do not expect the availability of special quality hardware for the integrity of these data, do we? — How to overcome then this trouble?